Hello Stefan and Rick! Thank you both for the detailed responses <3

Q0

Indeed. There are many open pipetting robot projects out there, and gathering around a more agnostic protocol programming framework seems important.

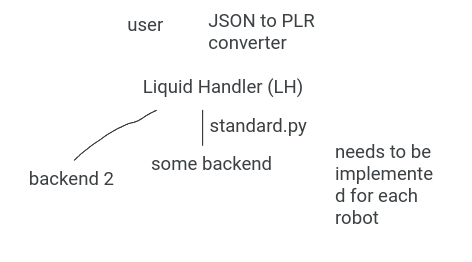

From what I understand, each PLR protocol has a few lines that load the robot-specific backend modules, and the rest of the “syntax” (i.e. the way of using Python to program protocols) is the hardware-agnostic part.

Q0.1: Correct?

Almost. I’ve been considering something like this:

[some GUI <->] Python syntax from OT2 ↔ hardware specific commands for your robot

This would totally avoid the issue of adapting OT2’s codebase to new hardware, which I agree would be hard.

And, in this way, the protocols in OT2’s database could be reused by anyone. This interoperability layer seemed great, because it bridges to the existing OT community.

Q0.2: Can PLR use OT2 protocols and their “context”?

I’ve invited some of them here, and I hope they join this chat.

Q1

I’ll need to study PLR more to understand this exactly. Every automation project, including ours, has come up with different terms for the same stuff.

That is great to hear!

Many of my hesitations come from this point. The robot we made can tool-change, and that adds so much flexibility to lab automation that it becomes really hard for me to think about a general framework.

My best idea so far is to define (and grow) a list of atomic actions, starting with the ones for liquid handling with micropipettes, and slowly add others as hardware modules become available (e.g. “pick a colony”, “take a picture”, “spin the tubes”, etc.).

Any capability not provided by a particular back-end should either be delegated to a human (which is what happens today anyway) or raise an error, but the action should still be allowed to exist in the protocol.
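To make the fallback idea concrete, here is a minimal Python sketch. It is not PLR code and not our actual implementation: `Backend`, `run_action`, `UnsupportedAction`, and the `SPIN_TUBES` action name are all made up for illustration.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict


class UnsupportedAction(Exception):
    """Raised when a backend lacks an action and no human fallback is allowed."""


@dataclass
class Backend:
    """Hypothetical robot backend: maps action names to handler functions."""
    name: str
    handlers: Dict[str, Callable[[dict], str]] = field(default_factory=dict)


def run_action(backend: Backend, action: str, params: dict,
               fallback: str = "human") -> str:
    """Run an action on the backend, or fall back when it is not supported."""
    handler = backend.handlers.get(action)
    if handler is not None:
        return handler(params)
    if fallback == "human":
        # The step stays in the protocol; a person carries it out.
        return f"HUMAN: please perform {action} with {params}"
    raise UnsupportedAction(f"{backend.name} cannot perform {action}")


# A backend that can only pick up tips (hypothetical).
bot = Backend("demo-bot", {"PICK_TIP": lambda p: f"picked tip from {p['well']}"})

print(run_action(bot, "PICK_TIP", {"well": "A1"}))
print(run_action(bot, "SPIN_TUBES", {"rpm": 3000}))
```

With `fallback="error"` the same unsupported call raises `UnsupportedAction` instead of producing a message for the operator, so the choice between "ask a human" and "stop" could be made per protocol or per run.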

Q1.1: Would implementing this in PLR make sense?

Eventually, if a robot gains a missing capability, less protocol re-programming would be needed.

It won’t be hard to add location parameters to the underlying functions.

The “atomic” actions we have defined so far are: HOME, PICK_TIP, LOAD_LIQUID, DROP_LIQUID, DISCARD_TIP, PIPETTE, COMMENT, HUMAN, WAIT.

Tool-change is handled automatically by our module, because each action specifies which pipette or tool must be grabbed from the parking posts.
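Roughly, the automatic tool-change logic can be sketched like this. It is a simplified illustration rather than our actual module: the `PARK` and `GRAB` step names, the `tool` field, the `p200`/`p20` tool names, and the `plan_with_tool_changes` function are assumptions made for the example.

```python
from typing import Dict, List, Optional


def plan_with_tool_changes(actions: List[Dict[str, str]]) -> List[str]:
    """Expand a list of actions into steps, adding tool changes when needed."""
    steps: List[str] = []
    current_tool: Optional[str] = None
    for action in actions:
        tool = action.get("tool")  # None means the action needs no tool
        if tool is not None and tool != current_tool:
            if current_tool is not None:
                # Return the tool in hand to its parking post first.
                steps.append(f"PARK {current_tool}")
            steps.append(f"GRAB {tool}")
            current_tool = tool
        steps.append(action["cmd"])
    return steps


protocol = [
    {"cmd": "HOME"},
    {"cmd": "PICK_TIP", "tool": "p200"},
    {"cmd": "LOAD_LIQUID", "tool": "p200"},
    {"cmd": "DROP_LIQUID", "tool": "p200"},
    {"cmd": "DISCARD_TIP", "tool": "p200"},
    {"cmd": "PICK_TIP", "tool": "p20"},
]

for step in plan_with_tool_changes(protocol):
    print(step)
```

The point is that the protocol author never writes a tool-change step: it falls out of the `tool` each action declares.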

I’d need some more guidance and experimentation to learn exactly which information is stored and passed by PLR, and how. I expect this to take more time than changing our code.

Another aspect I might have missed before is about resources.

In our current setup, the GUI populates a Mongo database with every definition (which are all JSON essentially), and then passes only a protocol name to the machine controller.
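As a rough picture of that hand-off, here is a small Python sketch in which a plain dictionary stands in for the Mongo collection. The document fields (`labware`, `slot`, `actions`, `tool`) and the `load_protocol` helper are hypothetical and only meant to show the "pass a name, resolve the JSON on the controller side" pattern.

```python
import json
from typing import Dict

# Stand-in for the database: protocol name -> JSON document (as text).
FAKE_DB: Dict[str, str] = {
    "demo_protocol": json.dumps({
        "name": "demo_protocol",
        "labware": [{"type": "tip_rack", "slot": 1}],
        "actions": [{"cmd": "HOME"}, {"cmd": "PICK_TIP", "tool": "p200"}],
    })
}


def load_protocol(name: str, db: Dict[str, str] = FAKE_DB) -> dict:
    """Resolve a protocol name into its full definition."""
    try:
        raw = db[name]
    except KeyError:
        raise KeyError(f"no protocol named {name!r} in the database") from None
    return json.loads(raw)


# The GUI only sends the name; the controller looks up everything else.
protocol = load_protocol("demo_protocol")
print([action["cmd"] for action in protocol["actions"]])
```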

Q1.2: Can PLR store or load resource definitions from a database, or files?

Q1.3: Would this final layout make sense?

[Our GUI] <-> [PLR + PLR Backend] <-> [slicer + controller specific to a robot]

I can see that there’s been great effort in documenting PLR, and congrats on that. I’ll have a better look around considering what you explained, and come back if I fail to make progress.

And finally…

Q2.1: On the other hand, if you’re interested, we could set up a brief call to better outline development and map our project’s components to PLR. I’d be glad to contribute to the contributing guide.

Sorry for the long post, and thanks again for your help and the amazing effort!

Best!

Nico

PS: Here’s our project: Open Lab Automata / Pipetting Bot (GitLab)